Make me think

Another divide in the AI dialogue: propensity for thought

Project assumptions versus implementation details. Genre conventions versus Oxford commas. System architectures versus code. These are not the same thing. Clearly. Unfortunately, they are often treated as such. And when they are, communication gets garbled.

This tendency of communicators to talk past one another, to parley at different tiers of abstraction, can be mitigated with good practices. For example, by explicitly scoping a dialogue to a specific stage or resolution. However, such constructs are both difficult to configure and hard to adhere to in real time. Thus: good communication is hard. Nowhere is this more apparent than in the seething storm of discourse concerning artificial intelligence and its applications.

One of the keystone divides in the AI discourse involves centaurs and butlers. Loosely: seeing artificial intelligence as an augmentation versus as an enslavable agent. Most people have a disposition aligned to one stance or the other. So, when two people with such divergent orientations talk AI, the outcome is often heat, not light. Another keystone chasm in the discourse that’s emerging? Propensity for thought.

There’s this seminal book on web design and user experience. It’s called Don’t Make Me Think. I haven’t read it but I will TL;DR it like I have:

Usability means minimal cognitive effort is required by a user

This is a high-context heuristic, not a universal law. Absence of friction can cause one to move too fast and break things. Cognitive speed makes certain phenomena harder to see. Yet, perceived through the prism of AI, this rule of thumb achieves escape velocity from the domain of design. It points to another salient stance regarding AI: to think or not to think.

One example: many people have noticed that ChatGPT’s image processing abilities can make accessing electrical component specs less nightmarish. Just snap the serial and get the specifications. Another: for approximately two years I had a TODO in my Roam Research that involved enumerating the titles, authors, original volumes and genres of all eighty texts in Penguin’s Little Black Classics series. It would’ve taken me an hour or two to do manually. Instead, it took five minutes with a little prompting.

That’s using AI to avoid thought. Let’s invert. How can AI be used to invoke thought?

I was recently reading about analog computing. And as I have only basic competency interpreting circuit diagrams, I often ignore them. Not with ChatGPT. I took a picture of the circuit, flung it into the compute-maw, and prompted for an explanation. Then I moved on.

Another example? Agile development uses user stories and acceptance criteria. Well, it turns out that an LLM is well suited to stress-testing such constructs. Basic prompts (“evaluate this user story and its acceptance criteria from the perspective of a developer with no understanding of the project”) reveal a lot. As do more targeted, domain-specific ones (“evaluate this user story and its acceptance criteria’s suitability for a HIPAA-compliant product”). Non-LLM thought invocations exist, too. This is exploratory machine learning within academia. This is observability engineering in industry.

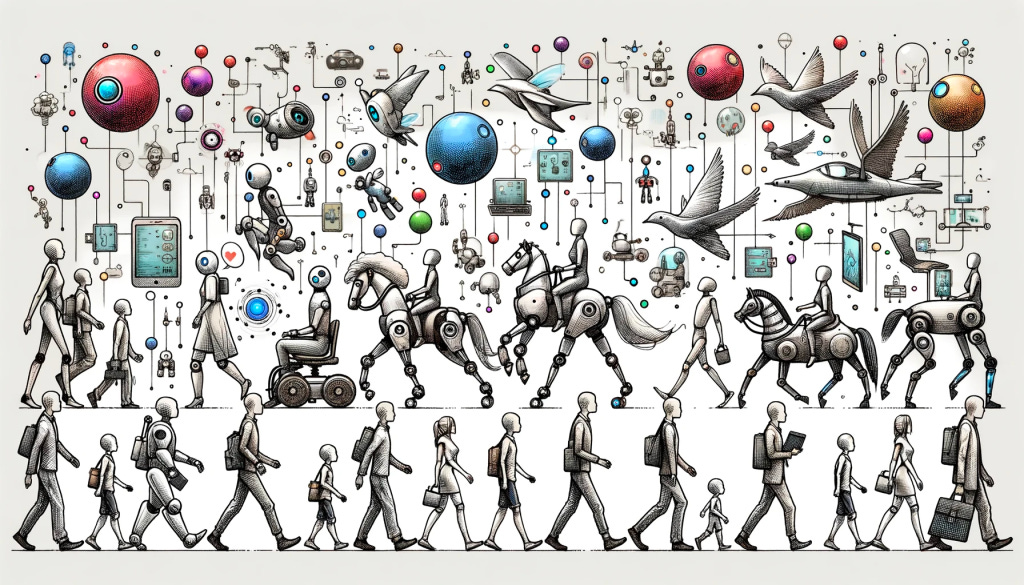

Now, take these two divides—centaur-butler, think-don’t-think—and slam them together. This conceptual collision produces four distinct stances one can employ and explicitly signal when dialoguing about artificial intelligence (and when deciding to make use of the related tooling). I will characterise each by the sort of persona the stance embodies.

If one’s stances are AI-as-Centaur and Make Me Think, then one gets a disembodied co-creator. An entity to influence and an augmentation to be influenced by. If one’s stances are AI-as-Centaur and Don’t Make Me Think, then one gets an agency amplifier. A trusted outsourcing partner for accomplishing necessary-but-not-sufficient tasks for desirable outcomes. If one’s stances are AI-as-Butler and Make Me Think, then one gets a tireless midwit assistant. Ad hoc access to indefatigable, mediocre reasoning. If one’s stances are AI-as-Butler and Don’t Make Me Think, then one gets a compute slave. A coarse, compute-intensive tool for completing demeaning, legible, unimportant actions.

These two divides and their inherent stances are ultimately about equity and outcomes. Is a sufficiently advanced, non-human intelligence equivalent in status to humanity, or inferior? Is artificial intelligence tooling—no matter how simple or complex the underlying substrate—something one uses to ask questions or to find answers?

These used to be fringe concerns. The playthings of philosophers, researchers, outsiders and the like. Yet now, they’re becoming (or re-emerging as) the fodder of mass culture. And I’m skeptical about where that takes us when we can’t or won’t engage critically with these emerging models of the world. Nevertheless, my hope is that an expanding awareness of one’s stances and intent with regard to these phenomena can introduce a dose of nuance.