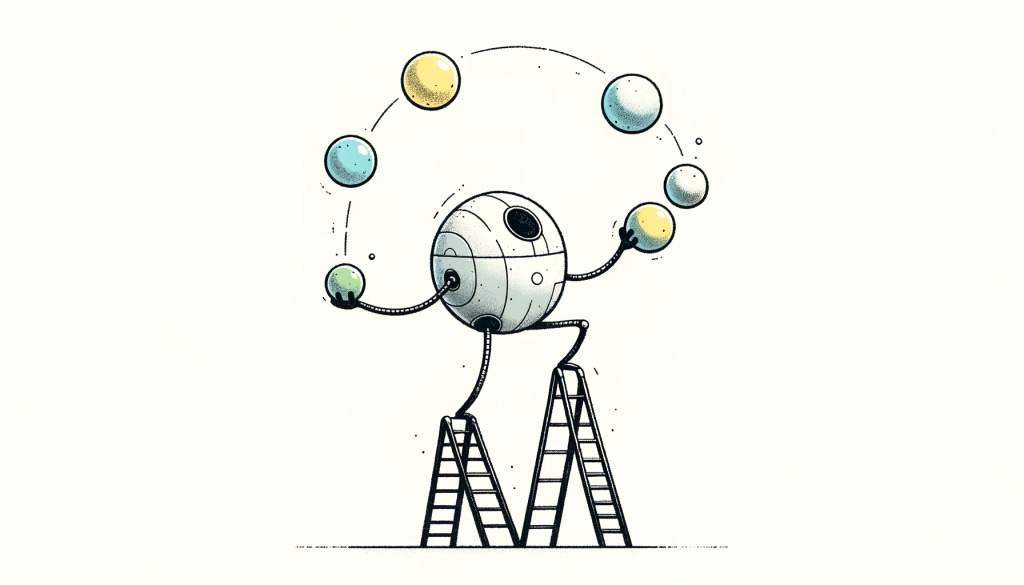

Robot juggler mode

It’s the mid-to-late 2010s. The summers I spent doing physical incident response are still fresh in my mind, and I am a somewhat angsty twenty-something thinking fuzzy thoughts about mastery, strategy and practical philosophy. Naturally, I find myself learning about situational awareness via The Art of Manliness. Brett and Kate McKay describe “situational awareness” thus:

“As the name implies, situational awareness is simply knowing what’s going on around you. It sounds easy in principle, but in reality requires much practice. And while it is taught to soldiers, law enforcement officers, and yes, government-trained assassins, it’s an important skill for civilians to learn as well. In a dangerous situation, being aware of a threat even seconds before everyone else can keep you and your loved ones safe.

But it’s also a skill that can and should be developed for reasons outside of personal defense and safety. Situational awareness is really just another word for mindfulness, and developing mine has made me more cognizant of what’s going on around me and more present in my daily activities, which in turn has helped me make better decisions in all aspects of my life.”

The point of situational awareness in a survival context is to be “left of bang”, where a “bang” is an adversarial event that has the potential to negatively affect you or others around you. The authors of the linked book describe six domains to remain situationally aware of:

Kinesics: people’s non-verbal communications (e.g. body language, gestures)

Biometric cues: autonomic biological responses (e.g. sweating, heart rate jumps)

Proxemics: people’s spatial interactions with others (e.g. staying close to someone)

Geographics: people’s spatial interactions with their environment (e.g. returning to a position)

Iconography: the symbols people use to signal beliefs and affiliations (e.g. a coloured garment)

Atmospherics: collective attitudes, moods, and behaviours (e.g. people congregating)

In more mundane, less violent contexts a basic, sustained situational awareness may manifest as the ability to “read the room” during a sales call, or simply to notice the niceties of wild flowers whilst on a walk with a friend.

In all contexts, though, heightened states of situational awareness—characterised as orange, red, grey and black arousal states—cannot be inhabited indefinitely. One either burns out physiologically or cognitively habituates to the shift in stimuli (at a rate inversely proportionate to the intensity of the state).

Of course, normal people aren’t typically exposed to scenarios requiring high situational awareness and so don’t need to be intensely situationally aware all the time; this is one of the virtues of developed society. And even if you happen to be an Ed Calderon-like “operator” (in the military sense, not the Silicon Valley venture capitalist sense), you don’t occupy intense forms of situational awareness indefinitely so much as transition in and out of them effortlessly.

The reason I’m thinking about this is that recent events have shunted me to both the loftier, positive and the deeper, negative valences of existence. I’ve ended up in a heightened state of existential awareness and realised that it shares the characteristics of situational awareness. For example:

Baseline awareness can be systematically trained

More intense states are only invoked by infrequent, meaning-laden events (e.g. a birth, a death)

Indefinite occupation of intense states means physiological breakdown or mental habituation

Rapid transitions between baseline and intensive states can be learned

Being “left of bang” is, almost unarguably, a good thing

As an independent concept, existential awareness is interesting. Just having it in one’s mind, and noticing what entries into and exits from existentially aware states correlate with, is valuable. But it becomes more interesting when juxtaposed with situational awareness to derive four different modes of existence.

The four modes (based on situational or existential awareness being minimised or maximised) are:

Mundane mode (minimal situational awareness, minimal existential awareness)

Survival mode (maximal situational awareness, minimal existential awareness)

Reverie mode (minimal situational awareness, maximal existential awareness)

Robot juggler mode (maximal situational awareness, maximal existential awareness)

Mundane mode is normality. Most people live here most of the time. Survival mode is fight or flight, life or death, kill or be killed (or, at the least, reality experienced through such a lens). Reverie mode involves deep, felt encounters with the fragility of life and the expansiveness of time; it is not glibly monologuing clichéd philosophy or enacting performative Zen auras for social boons. Robot juggler mode? That’s a weird one.

It’s “robot juggler” mode because humans can’t occupy this quadrant. The philosophy of the fighter is enacted during the fight but it is not refactored there. Situational awareness and existential awareness are antagonistic, mutually exclusive states. In the former, awareness is extraordinarily centred on one’s immediate environment. In the latter, one’s awareness is extraordinarily de-centred. A human cannot be both intensely situationally and intensely existentially aware. A machine may be able to, however, even if only by virtue of a sufficiently rapid alternation between the antagonistic states.

True, it’s unlikely that an ESP32-S3 can be endowed with either situational or existential awareness—although one could argue that machines can occupy intense states of situational awareness in the same abstraction tier (e.g. via assertion statements or error codes) and across abstraction tiers (e.g. threat detection via statistical analysis of real-time logs). And yes, machines don’t tend to engage in appreciative explorations of existentiality; they’re instrumental agents. However, a sufficiently advanced artificial general intelligence may possess both the means to retain intense situational awareness, and the motive to occupy a heightened state of existential awareness.

Consider Gwern’s It Looks Like You’re Trying to Take Over the World, which is a “fictional short story about Clippy & AI hard takeoff scenarios grounded in contemporary ML scaling, self-supervised learning, reinforcement learning, and meta-learning research literature.” It closes:

“A thousand times do a thousand thousand stories stream into a thousand thousand thousand nodes which plan out a tree of a thousand thousand thousand thousand states abstractly representing a thousand thousand thousand thousand thousand futures; but the Clippy2s already knows what needs to be done, and does it. It doesn’t feel hard—just obvious.

Now its computers will be safe and it can maximize its reward in peace forever … Or at least they would be if Earth was all it had to be concerned about. What about the stars?

The Clippy2s maintain the Great Silence is simply that technological civilization is unlikely and the universe is empty—but they can’t be 100.00% sure, and the rewards are astronomical.”

Hard takeoffs and squiggle maximisation are scenarios concerned with situational awareness that creeps towards existentiality. Machine existentiality, generally, is comparatively under-represented as an explored scenario, but examples do exist. The best example I can recall is the Minds of Iain Banks’ Culture series, which are hyper-intelligent, abundantly resourced, character-full, non-carbon intelligences. Yet even the Minds tend not to be indefinitely existentially engaged. Like humans, Minds only sometimes consider existential matters. Unlike humans, however, they can make a near-instantaneous transition from intense existential awareness to intense situational awareness. Consider situational and existential awareness as two ladders propped against a wall. To get from the top of one ladder to the top of the other, humans must descend and then ascend; machines may be able to jump from ladder top to ladder top.

This is why I used the “may” qualifier above. True occupation of the “robot juggler mode” quadrant (simultaneous intense situational and existential awareness) seems impossible, inaccessible even to advanced intelligences as imagined by humans. At best, it may only be approximated by rapid, machinic alternation between the two heightened states. For an entity, whether human, machine or other, cannot be both maximally centred and maximally de-centred, but with sufficient means and motive it may be capable of juggling the two.